Slimes

March 29th 2021

Slime Generation

Slime Generation on the GPU from scratch

If any gifs jitter that's because my upload speed sucks and the files are quite big.

First things first:

A quick preview:

Youtube would like to talk to you about compression


Setting up

Beginnings

I was browsing YouTube when this video was uploaded. I watched it and wanted to do the same, but didn't want to learn Unity (which is what he used, as far as I know). So I decided to learn CUDA instead.

Tools / Libraries

So after setting up a basic C++ project and linking GLFW, GLEW, and ImGui I could finally open a window and render to it using OpenGL and have fancy on-screen menus with ImGui.

Drawing & Computing

The next step was to add a CUDA compute shader (a kernel, in CUDA terms) which renders stuff into a pixel buffer, using a four-byte value for each pixel. Then we need to transfer the pixel data from the compute shader to an OpenGL texture so we can render it. This is also really easy thanks to CUDA and OpenGL interoperability.
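To make the "four bytes per pixel" part concrete, here is a minimal sketch in plain C++ (so it runs without a GPU). The names `pack_rgba` and `make_gradient` and the channel order are my assumptions, not taken from the original code. In a CUDA kernel, each iteration of the loop body would be handled by one thread.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Pack four 8-bit channels into one 32-bit pixel, red in the lowest byte.
// Assumption: this channel order is illustrative, the post does not state it.
inline std::uint32_t pack_rgba(std::uint8_t r, std::uint8_t g,
                               std::uint8_t b, std::uint8_t a) {
    return static_cast<std::uint32_t>(r)
         | (static_cast<std::uint32_t>(g) << 8)
         | (static_cast<std::uint32_t>(b) << 16)
         | (static_cast<std::uint32_t>(a) << 24);
}

// Fill a width*height buffer (row by row) with a horizontal red gradient.
// On the GPU, each (x, y) pair would be computed by its own thread.
std::vector<std::uint32_t> make_gradient(int width, int height) {
    assert(width > 0 && height > 0);
    std::vector<std::uint32_t> pixels(static_cast<std::size_t>(width) * height);
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            // Scale x into 0..255; a one-pixel-wide image stays black.
            std::uint8_t red = width > 1
                ? static_cast<std::uint8_t>(255 * x / (width - 1))
                : 0;
            pixels[static_cast<std::size_t>(y) * width + x] =
                pack_rgba(red, 0, 0, 255);
        }
    }
    return pixels;
}
```

On a little-endian machine, this layout puts the bytes in memory as R, G, B, A, which is the order OpenGL reads for a `GL_RGBA` / `GL_UNSIGNED_BYTE` texture upload.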

(Protip)

If you don't want to spend three hours debugging why it's not rendering, consider checking whether you're actually calling your render method.

Compute shader adventures

Blurring

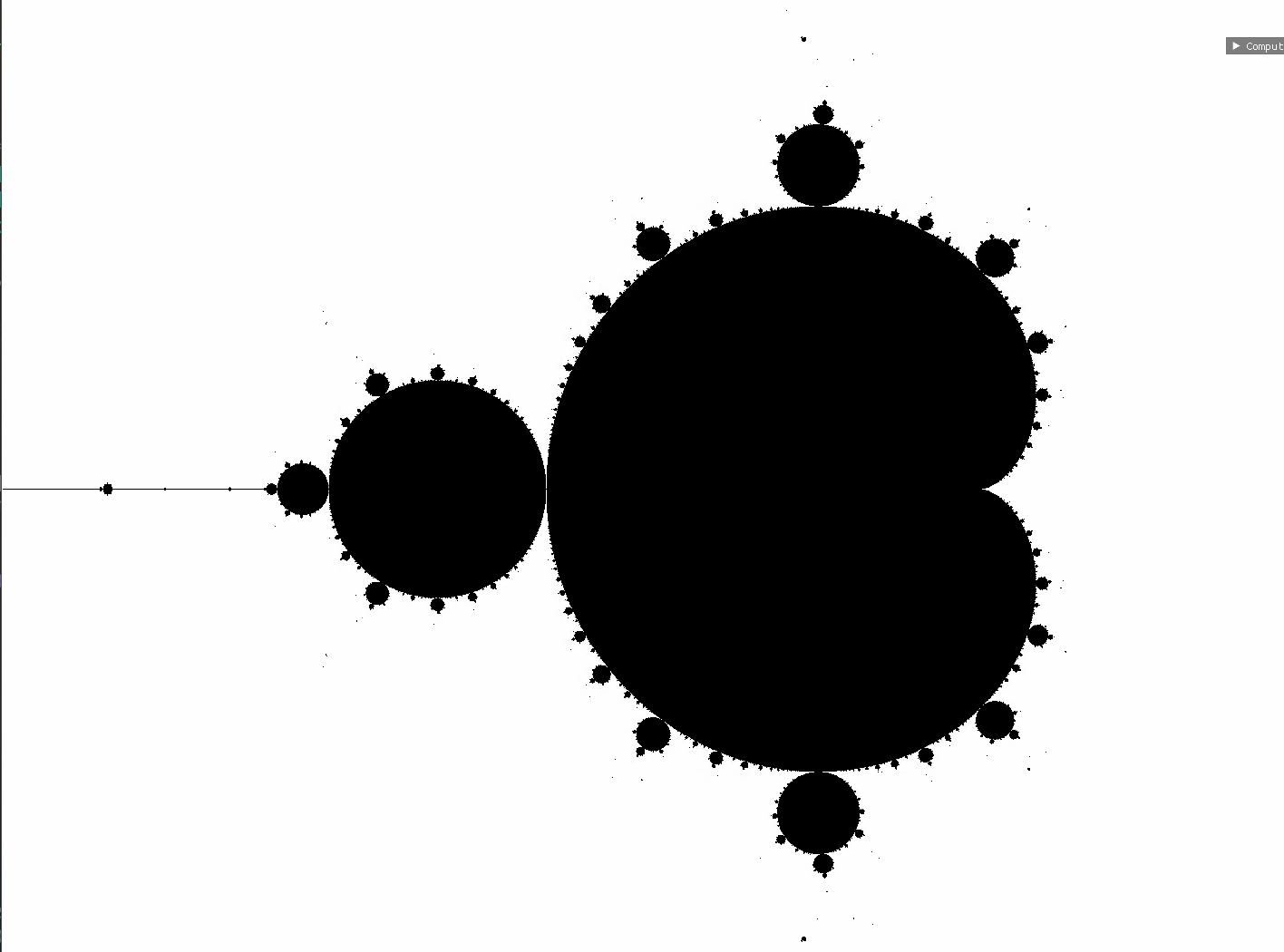

Once I had gotten this far, I first used CUDA to render some fractals (mainly Mandelbrot and Julia sets). I mean, you all know how they look, but here it is anyway.
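For reference, the per-pixel work for a Mandelbrot image is the usual escape-time loop. This is a minimal sketch in plain C++ with a hypothetical function name, not the original kernel; on the GPU, one thread would run it per pixel and map the returned count to a color.

```cpp
#include <cassert>

// Escape-time iteration count for the Mandelbrot set at c = (cx, cy).
// Returns max_iter if the orbit of z -> z*z + c never leaves |z| <= 2.
int mandelbrot_iterations(double cx, double cy, int max_iter) {
    assert(max_iter >= 0);
    double zx = 0.0, zy = 0.0;
    int i = 0;
    // Once |z| exceeds 2 (|z|^2 > 4) the orbit is guaranteed to diverge.
    while (i < max_iter && zx * zx + zy * zy <= 4.0) {
        double next_zx = zx * zx - zy * zy + cx;  // real part of z*z + c
        zy = 2.0 * zx * zy + cy;                  // imaginary part of z*z + c
        zx = next_zx;
        ++i;
    }
    return i;
}
```

A Julia set uses the same loop, except that z starts at the pixel's coordinates and c is a fixed constant.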

Then I started to work on replicating the effects of the video. First I created a CUDA function to blur the image using a Gaussian blur. A very early, broken version looked like this:

Obviously the outermost pixels can't be blurred using Gauss without special handling (they lack a full neighbourhood), so I decided it will just have a black perimeter. Also, in this version I forgot to change a '+' to a '-', so it only propagated downwards instead of upwards too.
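As for the blur itself, here is a minimal sketch of the idea in plain C++, assuming a 3x3 Gaussian kernel and a single-channel float image. The kernel size and the function name are my assumptions; the original code may well have used a larger kernel. The border is simply left black, as described above.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// 3x3 Gaussian blur on a single-channel image stored row by row.
// Border pixels are written as 0 (black) because they lack a full
// 3x3 neighbourhood.
std::vector<float> gaussian_blur_3x3(const std::vector<float>& src,
                                     int width, int height) {
    assert(width > 0 && height > 0);
    assert(src.size() == static_cast<std::size_t>(width) * height);
    static const float kernel[3][3] = {
        {1.f / 16, 2.f / 16, 1.f / 16},
        {2.f / 16, 4.f / 16, 2.f / 16},
        {1.f / 16, 2.f / 16, 1.f / 16}};
    std::vector<float> dst(src.size(), 0.0f);
    for (int y = 1; y + 1 < height; ++y) {
        for (int x = 1; x + 1 < width; ++x) {
            float sum = 0.0f;
            // dy and dx both run over -1..1, so neighbours above AND below
            // (and left AND right) contribute to the result.
            for (int dy = -1; dy <= 1; ++dy) {
                for (int dx = -1; dx <= 1; ++dx) {
                    std::size_t idx =
                        static_cast<std::size_t>(y + dy) * width + (x + dx);
                    sum += kernel[dy + 1][dx + 1] * src[idx];
                }
            }
            dst[static_cast<std::size_t>(y) * width + x] = sum;
        }
    }
    return dst;
}
```

Note that the function writes into a separate output buffer. Blurring in place would let already-blurred pixels leak into their neighbours' results, which matters even more on the GPU where pixels are processed in parallel.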

Agents

After fixing those bugs, the next goal was to create some moving agents which leave a trail, and to apply the blur to said trail. The first version looked like this:

The next already looked like this:
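As for the agents themselves, a minimal sketch of "move and leave a trail" could look like the following plain C++. The `Agent` struct, the `step_agents` name, and the edge rule (stay put and turn around) are all assumptions for illustration; the post does not say how the original handled edges. On the GPU, each agent would be updated by its own thread.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Agent {
    float x, y;   // position in pixels
    float angle;  // heading in radians
};

// Move every agent one step along its heading and mark the trail map
// (one float per pixel, stored row by row) at the new position.
void step_agents(std::vector<Agent>& agents, std::vector<float>& trail,
                 int width, int height, float speed) {
    assert(width > 0 && height > 0);
    assert(trail.size() == static_cast<std::size_t>(width) * height);
    for (Agent& a : agents) {
        float nx = a.x + std::cos(a.angle) * speed;
        float ny = a.y + std::sin(a.angle) * speed;
        // Assumed edge rule: do not move, turn around by half a circle.
        if (nx < 0.0f || nx >= width || ny < 0.0f || ny >= height) {
            a.angle += 3.14159265f;
            continue;
        }
        a.x = nx;
        a.y = ny;
        // Truncation is fine here because nx and ny are non-negative.
        std::size_t cell = static_cast<std::size_t>(ny) * width
                         + static_cast<std::size_t>(nx);
        trail[cell] = 1.0f;
    }
}
```

Running the blur over the trail map after each step is what turns the sharp one-pixel trails into soft, fading ones.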

Smarter agents

The next step was to make the agents smarter by letting them follow the trail left by other agents whenever possible. This was achieved by simply sampling the region in three directions and then steering towards the most favourable one. The steering amount is random, though. The results were already astounding. Although every agent has the same speed and the edges are sticky (for some unknown reason), I decided to leave it at that and play around with it a little bit.
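The sense-then-steer step can be sketched roughly like this in plain C++. All names and parameters here (`sense`, `pick_turn`, `steer`, `sensor_angle`, `sensor_dist`, `max_turn`) are hypothetical, and sampling a single pixel per sensor is a simplification; the original may have sampled a small region per sensor.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <random>
#include <vector>

// Read the trail value at a sensor placed `dist` pixels away from (x, y)
// in direction `angle`. Sensors outside the image read 0.
float sense(const std::vector<float>& trail, int width, int height,
            float x, float y, float angle, float dist) {
    assert(trail.size() == static_cast<std::size_t>(width) * height);
    int sx = static_cast<int>(std::floor(x + std::cos(angle) * dist));
    int sy = static_cast<int>(std::floor(y + std::sin(angle) * dist));
    if (sx < 0 || sx >= width || sy < 0 || sy >= height) return 0.0f;
    return trail[static_cast<std::size_t>(sy) * width + sx];
}

// Compare three sensors: straight ahead and rotated by -/+ sensor_angle.
// Returns 0 to keep going straight, -1 or +1 for the stronger side.
int pick_turn(const std::vector<float>& trail, int width, int height,
              float x, float y, float angle,
              float sensor_angle, float sensor_dist) {
    float ahead = sense(trail, width, height, x, y, angle, sensor_dist);
    float minus = sense(trail, width, height, x, y,
                        angle - sensor_angle, sensor_dist);
    float plus = sense(trail, width, height, x, y,
                       angle + sensor_angle, sensor_dist);
    if (ahead >= minus && ahead >= plus) return 0;
    return plus > minus ? 1 : -1;  // a tie between the sides goes to -1
}

// Turn by a random fraction of max_turn in the chosen direction.
float steer(float angle, int turn, float max_turn, std::mt19937& rng) {
    std::uniform_real_distribution<float> amount(0.0f, 1.0f);
    return angle + turn * max_turn * amount(rng);
}
```

Keeping the decision (`pick_turn`) separate from the random amount (`steer`) makes the deterministic part easy to check on its own. On the GPU, each thread would need its own random state instead of a shared `std::mt19937`.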

Playing around

Now all that was left for me was to play around with it. First I added some options to play around with while the simulation is running:

Then some settings concerning steering and sensors:

But I guess it's more fun to play with the settings yourself. So there's source code for all of this, of course (including commits containing dumb bugs :P).

PSA

Notes

All of the gifs above contain a bug where the red color channel was mistakenly set to a higher value than it should be, because two variables had the same name. But the effects look nice nonetheless. Maybe even better, who knows.

Source code

The source code is no longer available :(. The build process should be straightforward. Note that this has only been tested on Linux, and no guarantee is given that it works on your machine.
